Introduction: The Bridge Between Intent and Execution

It has been a few weeks since our last edition, where we presented the architectural blueprint (the “What”) for taming the beast: a centralized Q2C Orchestration Hub that uses a “Control System” to govern a financial AI Agent, ensuring it is intelligent but never creative with pricing.

That blueprint was vital, but a blueprint doesn’t process orders. Today, we move from architecture to engineering (the “How”).

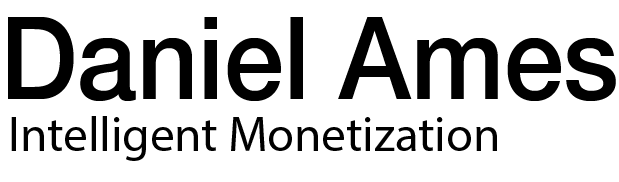

We are defining the Integration Protocol: the rigorous technical contract that allows this creative AI Agent to speak with rigid systems of record (ERP, BRIM, Catalogs). We will see the full cycle: how the Agent securely queries data to make decisions, and how the Hub imposes the rules to guarantee a “zero-touch” transition from Quote to Order.

1. The Universal Challenge: Moving Beyond Simple Translation

The fundamental disconnect in Q2C isn’t just linguistic; it’s about intelligent selection based on context.

- The Sales World (and the AI Agent) thinks in goals: “We need an aggressive offer to win a new logo,” or “We need to renew this existing client while preventing them from churning due to overage costs.”

- The Financial World (ERP/BRIM) only understands rigid data structures: Customer ID [X], Material SKU [Y], Condition Type [Z].

Our Goal: The AI Agent must act as an Intelligent Advisor. It needs to understand the sales goal, consult the data reality (catalog or usage history) in a governed manner, decide the optimal configuration, and finally, format that decision into the unimpeachable dialect required by the ERP.
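The last step of that goal, "format that decision into the dialect required by the ERP", can be pictured as a translation between two data shapes. The sketch below is an assumption, not our production code. It assumes a hypothetical `SalesDecision` held in sales vocabulary and a hypothetical `ErpLineItem` carrying the three rigid fields named above (Customer ID, Material SKU, Condition Type). The mapping tables, the SKU `SKU-GOLD-ENT` and the condition code `ZNL1` are all illustrative.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class SalesDecision:
    """What the Agent decided, still expressed in sales vocabulary."""
    customer_ref: str
    package_name: str
    discount_rule: str
    users: int


@dataclass(frozen=True)
class ErpLineItem:
    """The rigid ERP dialect: Customer ID, Material SKU, Condition Type."""
    customer_id: str
    material_sku: str
    condition_type: str
    quantity: int


# Hypothetical mapping tables owned by the Hub (not by the Agent).
PACKAGE_TO_SKU = {"Gold Enterprise Bundle": "SKU-GOLD-ENT"}
RULE_TO_CONDITION = {"New Logo Year-1 Accelerator": "ZNL1"}


def to_erp(decision: SalesDecision) -> ErpLineItem:
    """Translate a sales-level decision into the ERP structure.

    A KeyError is raised for any package or discount rule without a mapping,
    so an unknown value can never reach the ERP silently.
    """
    return ErpLineItem(
        customer_id=decision.customer_ref,
        material_sku=PACKAGE_TO_SKU[decision.package_name],
        condition_type=RULE_TO_CONDITION[decision.discount_rule],
        quantity=decision.users,
    )


if __name__ == "__main__":
    decision = SalesDecision("Temp Prospect 998", "Gold Enterprise Bundle",
                             "New Logo Year-1 Accelerator", 500)
    print(json.dumps(asdict(to_erp(decision))))
```

The mapping tables live on the Hub side on purpose. The Agent can choose *which* package and rule to use, but it cannot invent the codes that the ERP receives.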

2. The Human Experience: Intelligent Sales in Action

Before looking at the engine, let’s experience the result. What does this protocol look like in action for our two main scenarios?

As seen above, this is what the end user experiences: a natural-language conversation in which complex intent is understood and validated in real time.

Scenario A: The New Customer (Aggressive Acquisition)

- Sales Rep (Chat): I need a quote for a large new prospect (500 users), “Temp Prospect 998”. We need an aggressive introductory offer to win the deal this quarter. What is the best option?

- AI Co-pilot: (Performs governed queries to the catalog behind the scenes)… Based on the size and the need for an aggressive entry, I recommend the “Gold Enterprise Bundle”. To make it aggressive, I can apply the approved “New Logo Year-1 Accelerator” 10% discount. Shall I proceed?

- Sales Rep: Yes, go ahead.

Scenario B: The Existing Customer (Predictive Renewal)

- Sales Rep (Chat): It’s time to renew Customer XYZ (ID 0001098765). Based on their actual usage last year, what is the best plan to avoid them having excessive costs (overages) and getting upset?

- AI Co-pilot: (Performs governed queries to usage history and master data)… Their data shows they consistently exceed their current plan by 20%. To prevent future “Bill Shock,” I recommend upgrading them to the “Platinum Usage Plan”. I will also apply their validated “Level 2 Loyalty Discount” from master data. Shall I prepare the quote?

- Sales Rep: Yes, please.
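The reasoning behind Scenario B ("they consistently exceed their current plan by 20%") comes down to simple arithmetic on usage history. Here is a minimal sketch of that check. Only the "Platinum Usage Plan" name comes from the scenario. The current plan's name ("Standard Usage Plan"), both monthly allowances, the 10% upgrade threshold and the reading of "consistently" as "every month over the allowance" are all assumptions.

```python
from statistics import mean
from typing import Sequence

# Hypothetical plan catalogue: included usage units per month.
PLANS = {
    "Standard Usage Plan": 10_000,
    "Platinum Usage Plan": 15_000,
}


def overage_ratio(monthly_usage: Sequence[float], included: float) -> float:
    """Average usage relative to the allowance, minus 1 (0.2 means 20% over)."""
    return mean(monthly_usage) / included - 1


def recommend_plan(monthly_usage: Sequence[float], current_plan: str,
                   threshold: float = 0.10) -> str:
    """Upgrade only when every month exceeded the allowance and the average
    overage reaches the threshold; pick the smallest plan covering the peak."""
    included = PLANS[current_plan]
    consistently_over = all(u > included for u in monthly_usage)
    if not consistently_over or overage_ratio(monthly_usage, included) < threshold:
        return current_plan
    peak = max(monthly_usage)
    by_size = sorted(PLANS.items(), key=lambda kv: kv[1])
    fitting = [name for name, allowance in by_size if allowance >= peak]
    # If no plan covers the peak, fall back to the largest one available.
    return fitting[0] if fitting else by_size[-1][0]


if __name__ == "__main__":
    last_year = [12_000] * 12  # consistently 20% above a 10,000-unit plan
    print(round(overage_ratio(last_year, PLANS["Standard Usage Plan"]), 2))
    print(recommend_plan(last_year, "Standard Usage Plan"))
```

The sketch sizes the new plan against the peak month rather than the average. That choice leans toward avoiding "Bill Shock", at the cost of occasionally over-provisioning.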

3. The Missing Link: Reasoning and Governed Queries

How did the AI know what to recommend? It didn’t guess, nor did it have free rein over the database.

The Agent used governed “Read Tools” to query systems of record securely. These tools act as the Agent’s “eyes,” allowing it to see external data without being able to change it.
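One way to picture these "eyes" is a gateway that only exposes registered tools and refuses anything not flagged as read-only during the reasoning phase. The sketch below assumes that design. `ToolSpec`, `ToolGateway` and the write tool `Order_Create` used in the test are hypothetical names; only `CatalogSearch_ReadOnly` appears in the text.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class ToolSpec:
    name: str
    read_only: bool
    handler: Callable[[Dict[str, Any]], Any]


class ToolGateway:
    """Hub-side gateway: the Agent can only reach systems of record through
    tools registered here, and during reasoning only read-only tools."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, spec: ToolSpec) -> None:
        self._tools[spec.name] = spec

    def call_read(self, name: str, args: Dict[str, Any]) -> Any:
        spec = self._tools.get(name)
        if spec is None:
            raise PermissionError(f"unknown tool: {name}")
        if not spec.read_only:
            raise PermissionError(f"{name} is not a read tool")
        return spec.handler(args)


if __name__ == "__main__":
    gateway = ToolGateway()
    gateway.register(ToolSpec("CatalogSearch_ReadOnly", True,
                              lambda args: [{"package": "Gold Enterprise Bundle"}]))
    print(gateway.call_read("CatalogSearch_ReadOnly", {"segment": "Enterprise"}))
```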

Let’s look at the “behind the scenes” execution flow for Scenario A:

Step 3a: Intent and Governed Query (Input)

The Agent recognizes the need (“aggressive solution, Enterprise, >500 users”) and uses a defined read tool (e.g., CatalogSearch_ReadOnly) to send a structured, secure query to the backend.
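A "structured, secure query" suggests the read tool accepts a closed set of typed arguments instead of free text or raw SQL. The following is a minimal sketch under that assumption. The argument names (`segment`, `min_users`, `deal_goal`) and the goal vocabulary are hypothetical; the article does not specify `CatalogSearch_ReadOnly`'s real parameters.

```python
from typing import Any, Dict

# Hypothetical argument schema for CatalogSearch_ReadOnly.
CATALOG_SEARCH_SCHEMA: Dict[str, type] = {
    "segment": str,      # e.g. "Enterprise"
    "min_users": int,    # sizing requirement extracted from the request
    "deal_goal": str,    # e.g. "aggressive_acquisition"
}
ALLOWED_GOALS = {"aggressive_acquisition", "renewal_retention"}


def build_catalog_query(args: Dict[str, Any]) -> Dict[str, Any]:
    """Reject undeclared or missing arguments, wrong types and unknown goals,
    so only a well-formed structured query leaves the Agent."""
    unknown = set(args) - set(CATALOG_SEARCH_SCHEMA)
    missing = set(CATALOG_SEARCH_SCHEMA) - set(args)
    if unknown or missing:
        raise ValueError(f"unknown={sorted(unknown)} missing={sorted(missing)}")
    for key, expected in CATALOG_SEARCH_SCHEMA.items():
        # bool is a subclass of int in Python, so exclude it explicitly.
        if not isinstance(args[key], expected) or isinstance(args[key], bool):
            raise TypeError(f"{key} must be {expected.__name__}")
    if args["deal_goal"] not in ALLOWED_GOALS:
        raise ValueError(f"unsupported deal_goal: {args['deal_goal']}")
    return {"tool": "CatalogSearch_ReadOnly", "arguments": dict(args)}


if __name__ == "__main__":
    print(build_catalog_query({"segment": "Enterprise", "min_users": 500,
                               "deal_goal": "aggressive_acquisition"}))
```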

Step 3b: Raw Data Retrieval (Output)

The system responds with the irrefutable facts in JSON format. The AI uses its native data literacy to semantically understand this response.
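Before the decision step, it can help to turn the raw JSON into typed records, so that a missing field fails loudly instead of being filled in by the model. Here is a minimal sketch assuming a hypothetical response shape. The `SAMPLE` payload, including the "Silver Team Bundle" entry, its SKUs and its prices, is invented for illustration and is not real catalog data.

```python
import json
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class CatalogOffer:
    package: str
    sku: str
    max_users: int
    list_price: float
    eligible_discounts: Tuple[str, ...]


def parse_catalog_response(raw: str) -> List[CatalogOffer]:
    """Turn the read tool's JSON into typed records; a missing required key
    raises KeyError rather than being guessed."""
    payload = json.loads(raw)
    return [
        CatalogOffer(
            package=item["package"],
            sku=item["sku"],
            max_users=int(item["max_users"]),
            list_price=float(item["list_price"]),
            eligible_discounts=tuple(item.get("eligible_discounts", [])),
        )
        for item in payload["offers"]
    ]


# Hypothetical response from CatalogSearch_ReadOnly (illustrative values only).
SAMPLE = """{"offers": [
  {"package": "Gold Enterprise Bundle", "sku": "SKU-GOLD-ENT", "max_users": 1000,
   "list_price": 120000, "eligible_discounts": ["New Logo Year-1 Accelerator"]},
  {"package": "Silver Team Bundle", "sku": "SKU-SILVER-TEAM", "max_users": 250,
   "list_price": 40000}
]}"""

if __name__ == "__main__":
    for offer in parse_catalog_response(SAMPLE):
        print(offer)
```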

Step 3c: The Decision (Synthesis)

The AI analyzes the data. It determines that the “Gold” package is the best fit for an “aggressive” offer and pairs it with the valid “New Logo” discount rule. It has now made a grounded decision and is ready to execute.
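The shape of that decision can be made explicit with a deterministic stand-in. This is not a model of how the Agent actually reasons. The sketch assumes a hypothetical heuristic: take the cheapest offer that covers the seat count, then attach the New Logo rule only if the deal qualifies and the offer lists that rule as eligible. Only the 10% figure and the "Gold Enterprise Bundle" name come from the scenario. The other bundles and all prices are illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Offer:
    package: str
    max_users: int
    list_price: float
    eligible_discounts: Tuple[str, ...]


NEW_LOGO_RULE = "New Logo Year-1 Accelerator"
# Hypothetical approved-rule table; the 10% value matches Scenario A.
APPROVED_RULES = {NEW_LOGO_RULE: 0.10}


def decide(offers: List[Offer], users: int, is_new_logo: bool,
           aggressive: bool) -> Optional[Tuple[str, Optional[str], float]]:
    """Return (package, rule, net price), or None if nothing fits."""
    fitting = [o for o in offers if o.max_users >= users]
    if not fitting:
        return None  # nothing in the catalog fits: escalate instead of guessing
    best = min(fitting, key=lambda o: o.list_price)
    rule = None
    if aggressive and is_new_logo and NEW_LOGO_RULE in best.eligible_discounts:
        rule = NEW_LOGO_RULE
    pct = APPROVED_RULES[rule] if rule else 0.0
    return best.package, rule, round(best.list_price * (1 - pct), 2)


if __name__ == "__main__":
    catalog = [
        Offer("Silver Team Bundle", 250, 40_000.0, ()),
        Offer("Gold Enterprise Bundle", 1_000, 120_000.0, (NEW_LOGO_RULE,)),
        Offer("Platinum Enterprise Bundle", 5_000, 200_000.0, ()),
    ]
    print(decide(catalog, users=500, is_new_logo=True, aggressive=True))
```

The discount percentage is looked up from an approved table rather than produced by the decision itself. This matches the article's premise that the Agent may be intelligent but never creative with pricing.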

4. The Architect’s Solution: The Governance Protocol (Tool Definition)

The AI Agent has made an intelligent decision, but now it needs to cross the border into the transactional world. The Q2C Orchestration Hub does not allow it to simply “send” that decision to the ERP freely.

This image visualizes the core architectural concept: the “Tool Definition” acts as a strict governance gate that filters and structures the AI’s creative intent before it touches the ERP.

Our architectural solution is the “Write Tool Definition” (Transactional). These are the rigid rules the Hub imposes, acting as the Agent’s governed “hands,” forcing it to structure its decision into a validated format before it can touch the ERP.
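In code, a transactional tool definition can be read as a declared schema plus a validation gate that every write must pass. The sketch below assumes that reading. The tool name `Quote_Create_Governed`, the field names, the condition codes `ZNL1`/`ZLY2` and their percentages are hypothetical placeholders, not a real ERP interface.

```python
from typing import Any, Dict

# Hypothetical write-tool definition published by the Hub to the Agent.
CREATE_QUOTE_TOOL: Dict[str, Any] = {
    "name": "Quote_Create_Governed",
    "required": {"customer_id": str, "material_sku": str, "quantity": int,
                 "condition_type": str, "discount_pct": float},
    "approved_conditions": {"ZNL1": 0.10, "ZLY2": 0.05},
}


def validate_write(args: Dict[str, Any],
                   tool: Dict[str, Any] = CREATE_QUOTE_TOOL) -> Dict[str, Any]:
    """Gate between the Agent's decision and the ERP: fields must be declared,
    present and typed; the condition must be approved; and the discount must
    equal the approved value, so the Agent cannot invent a price."""
    required = tool["required"]
    extra = set(args) - set(required)
    if extra:
        raise ValueError(f"undeclared fields: {sorted(extra)}")
    for field, ftype in required.items():
        if field not in args:
            raise ValueError(f"missing field: {field}")
        if not isinstance(args[field], ftype) or isinstance(args[field], bool):
            raise TypeError(f"{field} must be {ftype.__name__}")
    approved = tool["approved_conditions"].get(args["condition_type"])
    if approved is None:
        raise ValueError(f"condition type not approved: {args['condition_type']}")
    if abs(args["discount_pct"] - approved) > 1e-9:
        raise ValueError("discount does not match the approved rule")
    if args["quantity"] <= 0:
        raise ValueError("quantity must be positive")
    return args
```

Any payload that fails the gate is rejected before it reaches the ERP. In practice, the Hub would then return the error to the Agent so that it can restructure its decision.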

Visualizing the Governance Protocol (The Transactional Tool Definition):

5. The Result: Flawless Execution Payloads

Because the AI followed the full cycle—(1) Governed Data Query -> (2) Intelligent Decision -> (3) Governed Execution via Tool Definition—the final result is a pristine data object ready for the ERP.
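The three-stage cycle can be sketched as a small pipeline. Each stage is injected as a function, so the Hub controls what runs at each step. `run_cycle` and the three stub stages are hypothetical stand-ins for the real read tool, the Agent's reasoning and the write-tool gate. The stub payload fields are illustrative and do not reproduce the actual Scenario A payload.

```python
import json
from typing import Any, Callable, Dict, List


def run_cycle(query: Callable[[Dict[str, Any]], List[Dict[str, Any]]],
              decide: Callable[[List[Dict[str, Any]]], Dict[str, Any]],
              validate: Callable[[Dict[str, Any]], Dict[str, Any]],
              request: Dict[str, Any]) -> str:
    facts = query(request)         # (1) governed data query (read tool)
    decision = decide(facts)       # (2) intelligent decision (Agent)
    payload = validate(decision)   # (3) governed execution (write-tool gate)
    return json.dumps(payload, sort_keys=True)  # deterministic ERP-ready JSON


# Hypothetical stubs standing in for the real components.
def stub_query(request: Dict[str, Any]) -> List[Dict[str, Any]]:
    return [{"sku": "SKU-GOLD-ENT", "max_users": 1000, "conditions": ["ZNL1"]}]


def stub_decide(facts: List[Dict[str, Any]]) -> Dict[str, Any]:
    offer = next(f for f in facts if f["max_users"] >= 500)
    return {"customer_id": "Temp Prospect 998", "material_sku": offer["sku"],
            "quantity": 500, "condition_type": offer["conditions"][0]}


def stub_validate(decision: Dict[str, Any]) -> Dict[str, Any]:
    required = {"customer_id", "material_sku", "quantity", "condition_type"}
    if set(decision) != required:
        raise ValueError("payload does not match the tool definition")
    return decision


if __name__ == "__main__":
    print(run_cycle(stub_query, stub_decide, stub_validate,
                    {"segment": "Enterprise", "min_users": 500}))
```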

Final Payload – Scenario A (New Customer): The AI selected the SKU and the specific discount rule based on the retrieved catalog data.

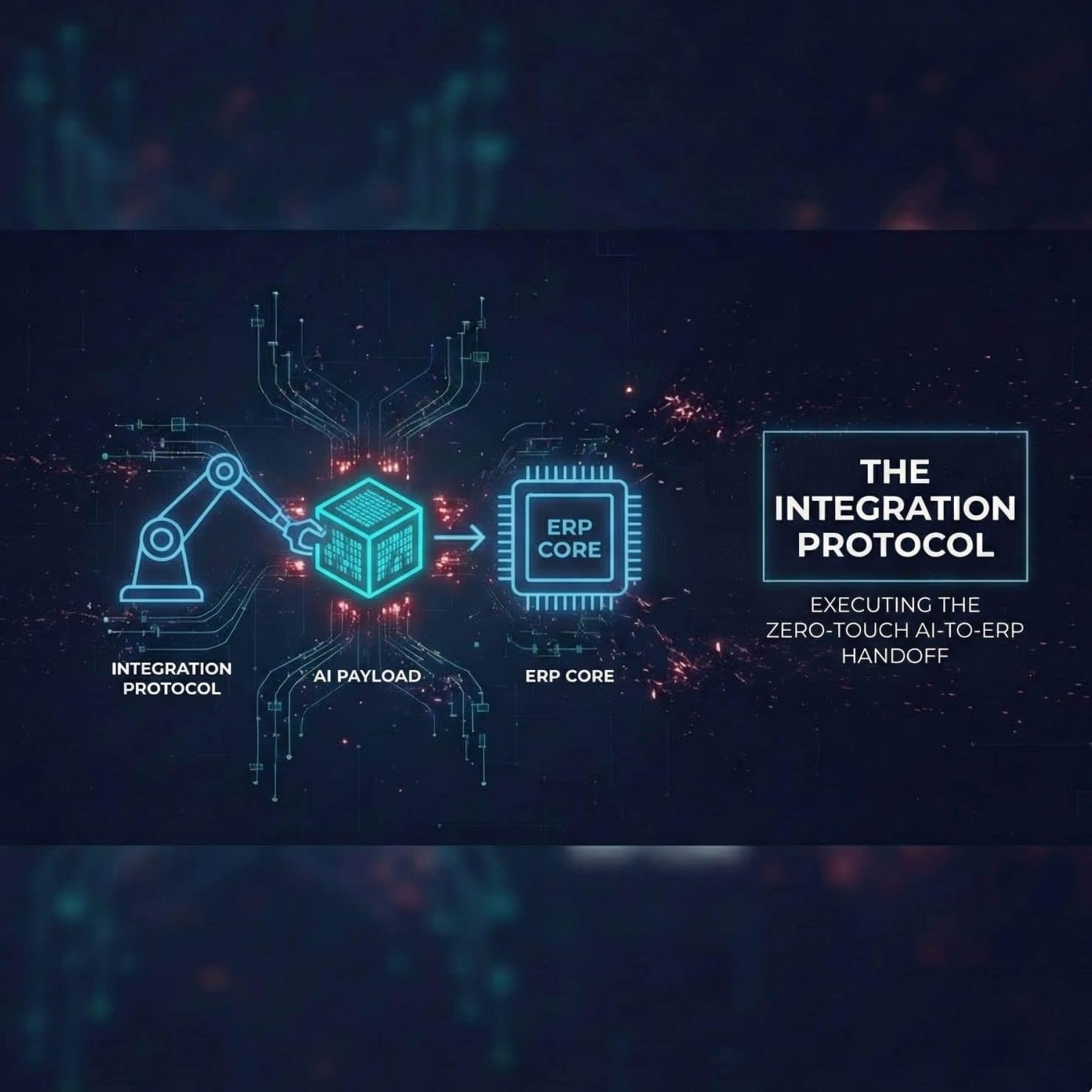

Conclusion: Closing the First Loop, Opening the Second

With this technical integration protocol now defined, we have formally closed the loop on the first “drainpipe” of revenue leakage identified in our inaugural article: Error-Riddled Quotes.

We have traveled the full path to solve the problem of sales teams lacking real-time, unified data. We diagnosed the initial “cost of chaos” (Art. 1), established an AI-driven vision (Art. 3), defined the strict architectural controls (Art. 4), and finally, established the execution protocol (Art. 5). We now possess a complete, end-to-end blueprint for Intelligent, Zero-Touch Quoting. The contract is signed flawlessly. The order is booked perfectly in the ERP. The first major architectural challenge is solved.

But the monetization lifecycle has only just begun. We now move to the second major challenge.

This matters most in complex scenarios like Scenario B (Predictive Renewal), where we have just sold a plan based on the promise of a certain level of usage. What happens tomorrow, when the customer actually starts using the service?

How do we ensure the chaotic technical reality of sensors, networks, API calls, or platforms exactly matches the rigid financial promise of the newly signed contract? If these don’t align, we fall immediately into the second drainpipe identified in our first article: Billing System Blind Spots.

Next Week: We move from the sales office to the engine room to tackle this second challenge. We will dive into the critical role of Convergent Mediation—the data refinery that stands between the network and the ERP, ensuring that what you measure is exactly what you bill.