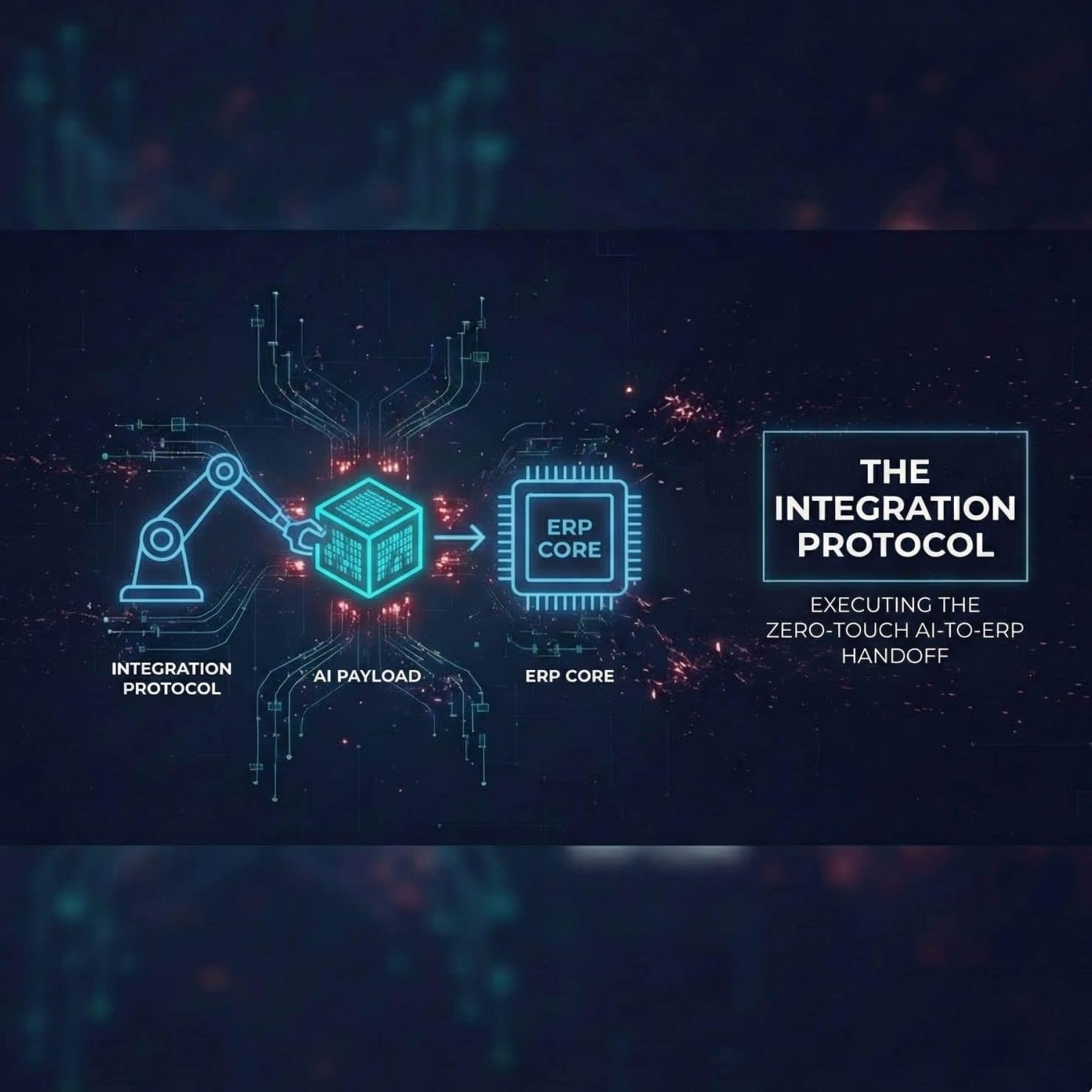

Last week, in our second edition, Stop the Bleeding Before the Ink Dries, we isolated the Initial Drain: the “Blind Quote” launched by the Sales team (CRM/CPQ) without visibility into the Billing reality (ERP/BRIM). We then moved past simple automation and set two goals:

- Preventing the “Structure Trap” when creating offers for new customers.

- Avoiding “Bill Shock” for existing customers through predictive analysis.

The reaction from the community was immediate:

“We love the vision, but our CFO and billing teams are terrified. How do we trust an AI, famous for being ‘creative,’ with our financial integrity?”

This is the right fear to have. In monetization, a 95% accuracy rate isn’t an achievement—it’s a regulatory disaster. We cannot allow an LLM to hallucinate prices or invent discount rules.

The solution isn’t to remove intelligence, but to cage it. We need AI’s predictive capability to understand behavior, but we need ironclad control to ensure it never invents a price.

Today, we move from the “what” to the “how.” Enough with the vision pieces. This article is an engineering blueprint. We are defining the strict architectural constraints—specifically based on Retrieval-Augmented Generation (RAG)—required to force a creative AI to adhere to rigid financial compliance.

The Fear Factor: A Critical Pivot on “Training”

Before getting into the blueprint, I must address an uncomfortable truth that emerged when moving from diagram to engineering reality.

There is a very common misconception in enterprise AI—one we must address head-on: “To make the AI understand our catalog and billing rules, we need to train or fine-tune the model with our data.”

This sounds intuitive, but in finance, it is almost always a mistake. Fine-tuning is expensive, slow to update when prices change, and, critically, it does not guarantee that the model won’t invent things outside the rules.

The solution isn’t to force the AI to memorize your rulebook. The solution is Grounding—often implemented via RAG (Retrieval-Augmented Generation).

You don’t want the AI to be your “New Pricing CEO,” making decisions based on hazy memories. You want it to be an expert librarian. It doesn’t write the books; it knows exactly which shelf the approved rulebook is on, retrieves the precise page in real time, and never invents new chapters.

The Missing Piece: From Passive Search to Agentic AI

Grounding is the goal, and RAG is the common technique for achieving it. But RAG alone is a passive search mechanism. To handle complex Q2C workflows, a librarian isn’t enough; we need an orchestrator.

We need to move toward Agentic AI.

Unlike a standard LLM that simply responds to a prompt, an Agentic AI system in enterprise finance is designed to reason, plan, and actively use “tools” to complete a mission.

Crucially, in our architecture, RAG is not the whole system; RAG is the primary tool the Agentic AI is mandated to use. The agent is the pilot; RAG is the mandatory instrument panel that prevents it from flying blind.

Let’s see this agentic architecture in action.

The Blueprint in Action: Two Scenarios, One Source of Truth

Let’s apply this technical grounding to the two scenarios we established last week.

Scenario A: New Customers – Stopping the Structure Trap

The Challenge: A sales rep asks the AI for something complex: “Give this new enterprise client the ‘Gold Bundle,’ but swap standard support for premium, add a 12% discount for the first six months, and bill it quarterly.”

The Hallucination Risk: If left unchecked, an AI might happily invent a non-existent “Hybrid-Platinum-Gold-Quarterly-Special” SKU, creating a contract your ERP will reject instantly.

The Architectural “How” (Agentic AI + Tools):

- Agentic Reasoning & Intent: The AI Agent analyzes the request to understand the necessary workflow: Bundle → Swap → Discount → Billing Frequency.

- Mandatory Tool-Use (Grounding via RAG): The Agent recognizes it cannot invent pricing. It triggers its RAG tool to consult the official source of truth (e.g., your SAP BRIM/SOM product catalog) via real-time API.

- Deterministic Validation: The Agent compares the request against the retrieved rules. For example: “The catalog says the ‘Gold Bundle’ allows support swaps, but the maximum allowable discount for this segment is 10%, not the requested 12%.”

- Structured Output: The Agent returns a pre-validated, structured quote ready for the ERP without surprises, or an informed correction to the sales rep.

The Result: Creativity at the edge, compliance at the core.
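
To make the validation step concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `CATALOG` dictionary stands in for the real-time catalog API, and the names `QuoteRequest`, `retrieve_rules`, and `validate` are hypothetical, not part of any SAP interface. The point it tries to show is that once the agent has parsed the request, the compliance check is ordinary comparison logic with no model in the loop.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import List

# Hypothetical in-memory stand-in for the product catalog. In the architecture
# described above, this lookup would be a real-time call to the system of record.
CATALOG = {
    "GOLD_BUNDLE": {
        "allowed_support": {"standard", "premium"},
        "allowed_billing": {"monthly", "quarterly"},
        "max_discount_pct": {"enterprise": Decimal("10")},
    }
}


@dataclass(frozen=True)
class QuoteRequest:
    """Structured intent extracted by the agent from the sales rep's request."""
    sku: str
    segment: str
    support: str
    discount_pct: Decimal
    billing: str


def retrieve_rules(sku: str) -> dict:
    """Retrieval step: return the approved rules, or fail loudly for unknown SKUs."""
    if sku not in CATALOG:
        raise KeyError(f"SKU {sku!r} is not in the approved catalog")
    return CATALOG[sku]


def validate(request: QuoteRequest) -> List[str]:
    """Deterministic validation: plain comparisons against the retrieved rules."""
    rules = retrieve_rules(request.sku)
    problems = []
    if request.support not in rules["allowed_support"]:
        problems.append(f"support tier {request.support!r} is not allowed")
    if request.billing not in rules["allowed_billing"]:
        problems.append(f"billing frequency {request.billing!r} is not allowed")
    cap = rules["max_discount_pct"].get(request.segment)
    if cap is None:
        problems.append(f"no discount rule for segment {request.segment!r}")
    elif request.discount_pct > cap:
        problems.append(
            f"discount {request.discount_pct}% exceeds the {cap}% cap "
            f"for segment {request.segment!r}"
        )
    return problems


request = QuoteRequest("GOLD_BUNDLE", "enterprise", "premium", Decimal("12"), "quarterly")
for problem in validate(request):
    print(problem)
```

An empty list means the quote can be passed on as structured output; a non-empty list is the informed correction that goes back to the sales rep. An invented SKU never reaches the comparison stage, because retrieval fails first.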

Scenario B: Existing Customers – The “Garbage In, Garbage Out” Reality

The Challenge: You want predictive analysis to prevent “bill shock” from overage charges.

The Risk: Predictive models are useless if consumption data is dirty. The error is usually not in the AI, but in the fuel.

The “How” (The Data Refinery + Agent): Before financial AI can make a reliable prediction, Convergent Mediation (CM) must act as the refinery:

- Raw Ingestion: CM receives billions of chaotic usage events.

- The Refinery Process: It filters duplicates, normalizes units of measure, enriches records with customer context, and aggregates sessions.

- The Clean Fuel: CM creates the “Golden Financial Data Record.”
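
As a rough illustration of the refinery steps, the sketch below runs a tiny batch of usage events through deduplication, unit normalization, enrichment, and aggregation. It is a toy under stated assumptions, not how a Convergent Mediation product is implemented: the event fields (`event_id`, `account`, `quantity`, `unit`), the `TO_MB` conversion table, and the `refine` function are all hypothetical, and aggregation is done per account rather than per session to keep it short.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical conversion table: every quantity is normalized to megabytes.
TO_MB = {"KB": Decimal("0.001"), "MB": Decimal("1"), "GB": Decimal("1000")}


def refine(events, customers):
    """Turn raw usage events into one clean, enriched record per account."""
    seen_ids = set()
    totals = defaultdict(Decimal)  # Decimal() is zero, so totals start at 0
    for event in events:
        # Filter duplicates: the same event_id is only counted once.
        if event["event_id"] in seen_ids:
            continue
        seen_ids.add(event["event_id"])
        # Normalize units of measure to a single unit.
        usage_mb = Decimal(str(event["quantity"])) * TO_MB[event["unit"]]
        # Aggregate per account.
        totals[event["account"]] += usage_mb
    # Enrich each aggregate with customer context.
    return [
        {
            "account": account,
            "segment": customers[account]["segment"],
            "usage_mb": total,
        }
        for account, total in sorted(totals.items())
    ]


raw_events = [
    {"event_id": "e1", "account": "A", "quantity": 2, "unit": "GB"},
    {"event_id": "e1", "account": "A", "quantity": 2, "unit": "GB"},  # duplicate
    {"event_id": "e2", "account": "A", "quantity": 500, "unit": "MB"},
]
customers = {"A": {"segment": "enterprise"}}
print(refine(raw_events, customers))
```

Note that the duplicate event would have inflated the account's usage by 2 GB. A forecast trained on that inflated figure would be wrong before any AI touched it.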

Here is where prediction meets rigidity. With clean data, a specialized ML Forecast Engine can predict future behavior (e.g., usage volume). However, to “tame the beast,” the central AI Agent cannot invent the price for that forecast usage. It must use its RAG tool to retrieve exact, approved pricing rules from your current catalog and apply them to the forecast.
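
The split of responsibilities can be sketched in a few lines of Python. The forecast value comes from the ML engine; the price comes only from the catalog; the arithmetic in between is deterministic. The plan fields (`base_fee`, `included_units`, `overage_rate`) and their values are invented for illustration, as is the `projected_bill` function.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical pricing rule as it might be retrieved from the catalog.
# The values are illustrative, not real prices.
PLAN = {
    "base_fee": Decimal("200.00"),
    "included_units": Decimal("1000"),
    "overage_rate": Decimal("0.05"),
}


def projected_bill(forecast_units: Decimal, plan: dict) -> dict:
    """Apply retrieved pricing rules to a forecast; no price is generated here."""
    overage_units = max(forecast_units - plan["included_units"], Decimal("0"))
    overage_charge = overage_units * plan["overage_rate"]
    total = (plan["base_fee"] + overage_charge).quantize(
        Decimal("0.01"), rounding=ROUND_HALF_UP
    )
    return {"overage_units": overage_units, "total": total}


# The forecast (1,400 units) is the only input that comes from a model.
print(projected_bill(Decimal("1400"), PLAN))
```

With these assumed numbers, a forecast of 1,400 units against 1,000 included units gives 400 overage units at 0.05 each, so the projected bill is 220.00 instead of the 200.00 base fee. That difference is the early warning the customer should receive before the invoice does it for you.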

The Architect’s Solution: The System of Control

To secure the Q2C Nervous System we defined previously, we must evolve its central brain. We no longer visualize this as free-floating Generative AI, but as a tightly governed Q2C Orchestration HUB.

This HUB operates under a strict “chain of thought.” It understands intent, but is architecturally forbidden from generating a financial number without first using its RAG tools to retrieve the approved rule from your systems of record.

It does not decide. It does not invent. It consults, verifies, and returns. This is the blueprint for a financial AI brain that is intelligent enough to understand, but disciplined enough to follow the rules.
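
One way to picture “architecturally forbidden” is a guard at the output boundary: the only code path that can emit a price is one that starts from a retrieved rule. The sketch below is a simplified illustration under that assumption. `SYSTEM_OF_RECORD`, `RetrievedRule`, `emit_price`, and the price shown are all hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


class UngroundedNumberError(Exception):
    """Raised when a price is requested without a rule from the system of record."""


@dataclass(frozen=True)
class RetrievedRule:
    rule_id: str
    unit_price: Decimal


# Hypothetical stand-in for the system of record; the price is illustrative.
SYSTEM_OF_RECORD = {"GOLD_BUNDLE": RetrievedRule("PRICE-0001", Decimal("499.00"))}


def retrieve(sku: str) -> Optional[RetrievedRule]:
    """Retrieval tool: returns the approved rule, or None if there is none."""
    return SYSTEM_OF_RECORD.get(sku)


def emit_price(sku: str, proposed_by_model: Optional[Decimal] = None) -> dict:
    """Output guard: the model's own number is ignored; only retrieved rules count."""
    rule = retrieve(sku)
    if rule is None:
        raise UngroundedNumberError(f"no approved rule for {sku!r}; refusing to price")
    # The source rule travels with the number so the output can be audited.
    return {"sku": sku, "unit_price": rule.unit_price, "source_rule": rule.rule_id}


# Even if the model "suggests" 450.00, the emitted price is the catalog price.
print(emit_price("GOLD_BUNDLE", proposed_by_model=Decimal("450.00")))
```

The design choice worth noting is that the guard does not try to detect hallucinations. It simply gives the model no channel through which a number of its own can reach the quote.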

Conclusion: We Have Tamed the Beast (and Cut the Tax)

This is no longer an abstract vision. It is a reproducible, auditable architecture ready for production.

We have tamed the beast by ensuring AI does not replace your financial core; it empowers it with intelligent interfaces. The logic and rules remain strictly governed by your catalogs, your product systems, and your Data Layer.

Why does this matter? Because a financial AI that hallucinates isn’t an asset; it’s an accelerant for the “Invisible Tax.” Every invented discount or misread usage log is revenue leakage waiting to happen. By grounding the AI, we are structurally removing enormous sources of friction and error from the Q2C nervous system.

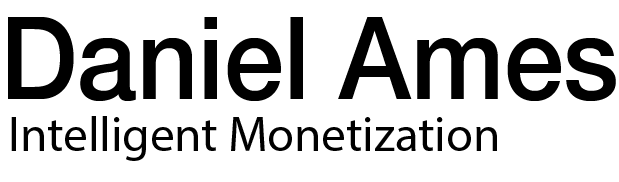

With a technically sound, non-hallucinating quote in hand, the next challenge appears: the execution handoff.

Next Week: The Integration Protocol. Today, we defined the Architectural Blueprint. Next week, we open the engine block to execute the Technical Integration. How does an Agentic AI actually “talk” to your ERP? We will dive into the exact JSON payloads and tool definitions required to ensure the critical handoff from Quote to Order is zero-touch and flawless.

Your Turn: Is the fear of AI “hallucinations” (and the tax penalties they create) holding your organization back from adopting it in financial processes? Let’s discuss the role of “grounding” in the comments.